
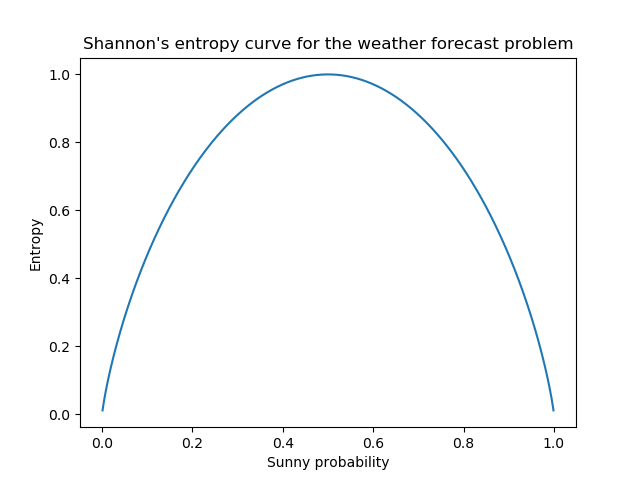

This article was published as a part of the Data Science Blogathon.

Introduction

Entropy is one of the key aspects of Machine Learning. It is a must-know for anyone who wants to make a mark in Machine Learning, and yet it perplexes many of us. The focus of this article is to understand how entropy works by exploring the underlying concepts of probability theory, how the formula works, its significance, and why it is important for the Decision Tree algorithm.

The term entropy was first coined by the German physicist and mathematician Rudolf Clausius and was used in the field of thermodynamics. Claude Shannon, a mathematician and electrical engineer, published the paper "A Mathematical Theory of Communication", in which he addressed the measurement of information, choice, and uncertainty. Shannon is known as the "father of information theory" because he founded the field.

How to use this tool

This tool is easy to use and has a simple layout, so it should be easy to understand. It requires no registration and is a free online tool that anyone can use from anywhere, on a phone or on a desktop, whichever is more comfortable. The tool has only one text box, so to enter several numbers, type them all into that box with a space or a comma after each number. Then click the Calculate button to get the answer. Tip: bookmark this tool so that you can use it in the future.

Shannon's entropy

Here is the formula for calculating the Shannon entropy, where $p(i)$ is the probability of the $i$-th outcome:

$$E = -\sum_i p(i)\,\log_2 p(i)$$
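As a rough illustration of the formula E = -∑ p(i) × log2(p(i)), here is a minimal Python sketch. It is not the code behind the online tool. The function names `parse_numbers` and `shannon_entropy` are hypothetical, and the sketch assumes the numbers entered are probabilities that sum to 1, typed with spaces or commas between them as described for the text box.

```python
import math
import re


def parse_numbers(text):
    """Split a string on commas and/or whitespace and convert the pieces to floats."""
    return [float(tok) for tok in re.split(r"[,\s]+", text.strip()) if tok]


def shannon_entropy(probabilities):
    """Return E = -sum(p * log2(p)) in bits; outcomes with p == 0 contribute nothing."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    # Assumption of this sketch: the inputs are probabilities, not raw counts.
    if not math.isclose(sum(probabilities), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


probs = parse_numbers("0.5, 0.25 0.25")
print(shannon_entropy(probs))  # 1.5
```

With three outcomes of probability 0.5, 0.25, and 0.25, the terms are 0.5 × 1 + 0.25 × 2 + 0.25 × 2, which gives 1.5 bits.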
Storage and transmission of information can intuitively be expected to be tied to the amount of information involved. For instance, information may be about the outcome of a coin toss. This information can be stored in a Boolean variable that can take on the values 0 or 1. We can use the variable to represent the raw data associated with the coin toss, viz., whether the coin toss came up heads or not. In digital storage and transmission technology, this Boolean variable can be represented in a single "bit", the basic unit of digital information storage/transmission. However, this bit directly stores the value of the variable, i.e., the raw data such as the outcome of the coin toss. It doesn't succinctly capture the information in the coin toss, e.g., whether the coin is biased or unbiased, and, if biased, how biased. Shannon's entropy metric, by contrast, quantifies, among other things, the absolute minimum amount of storage and transmission needed for succinctly capturing any information (as opposed to raw data), and in typical cases that amount is less than what is required to store or transmit the raw data behind the information. Shannon's entropy metric also suggests a way of representing the information in the calculated smaller number of bits.
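To make the coin-toss discussion concrete, the following Python sketch compares a fair coin with biased ones. The helper name `coin_entropy` and the chosen probabilities are illustrative assumptions, not part of the original text.

```python
import math


def coin_entropy(p_heads):
    """Entropy in bits of a coin that lands heads with probability p_heads."""
    total = 0.0
    for p in (p_heads, 1.0 - p_heads):
        if p > 0:  # an impossible outcome contributes no entropy
            total -= p * math.log2(p)
    return total


for p in (0.5, 0.9, 1.0):
    print(f"P(heads) = {p}: {coin_entropy(p):.3f} bits per toss")
# P(heads) = 0.5: 1.000 bits per toss
# P(heads) = 0.9: 0.469 bits per toss
# P(heads) = 1.0: 0.000 bits per toss
```

A fair coin needs the full bit per toss, while a heavily biased coin carries less than half a bit of information per toss, even though storing the raw outcome still takes one bit.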

Shannon's entropy quantifies the amount of information in a variable, thus providing the foundation for a theory around the notion of information.
